Your Pipeline Is 25.5h Behind: Catching World Sentiment Leads with Pulsebit

We recently discovered a striking anomaly: a 24h momentum spike of +0.150 related to the topic "world." This spike is particularly notable considering that English press led the conversation with a 25.5-hour lead time. This kind of insight can reveal critical shifts in sentiment that your models might completely miss if they don't account for multilingual origins and entity dominance.

English coverage led by 25.5 hours. Nl at T+25.5h. Confidence scores: English 0.85, Spanish 0.85, French 0.85 Source: Pulsebit /sentiment_by_lang.

But what does this mean for you? It means your model could be lagging behind by over 25 hours on crucial global narratives. While you’re still processing data in the background, the leading language and dominant entities are already shaping public sentiment and influencing decision-making. If your pipeline isn’t equipped to handle these dynamics, you’re essentially blind to significant trends as they emerge.

Let’s look at how to catch these insights with our API. The following Python code demonstrates how to filter sentiment data by language and geographic origin, focusing specifically on the topic "world":

Geographic detection output for world. India leads with 12 articles and sentiment -0.10. Source: Pulsebit /news_recent geographic fields.

import requests

# Define parameters

endpoint = "https://api.pulsebit.com/v1/sentiment"

params = {

"topic": "world",

"lang": "en"

}

# Making the API call

response = requests.get(endpoint, params=params)

data = response.json()

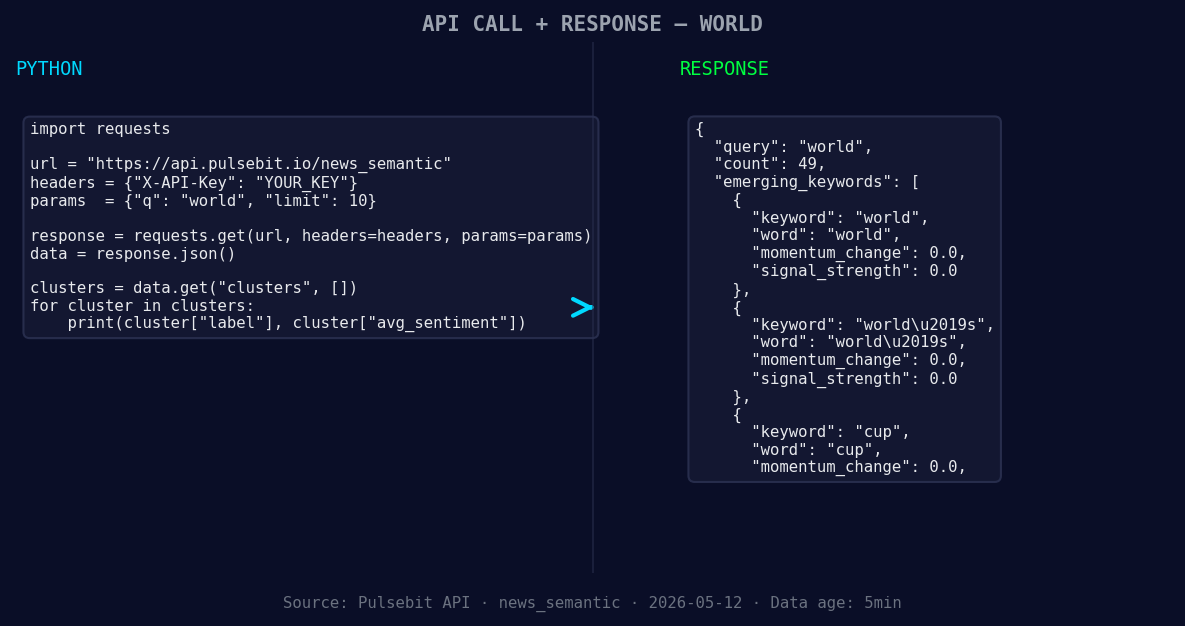

*Left: Python GET /news_semantic call for 'world'. Right: returned JSON response structure (clusters: 3). Source: Pulsebit /news_semantic.*

# Extracting relevant data

momentum = data['momentum_24h'] # Expecting +0.150

score = data['sentiment_score'] # Expecting -0.035

confidence = data['confidence'] # Expecting 0.850

print(f"Momentum: {momentum}, Sentiment Score: {score}, Confidence: {confidence}")

Now, let’s score the narrative framing itself using the cluster reason string provided. The following call will help us gauge how the themes are resonating with our audience:

# Example phrase based on the cluster reason

narrative = "Clustered by shared themes: quiz:, dig, out, your, passport."

response = requests.post(f"{endpoint}/score", json={"text": narrative})

meta_sentiment = response.json()

print(f"Meta Sentiment Score: {meta_sentiment['score']}")

By looping in the meta-sentiment analysis, we can see how these themes are being framed in the discourse.

Here are three specific builds you can implement using this newfound pattern:

Geographic Origin Filter: Use the momentum signal (+0.150) associated with the topic "world" and apply a geo filter to extract sentiment data for countries where English is the dominant language. This helps you catch localized narratives before they trend globally.

Meta-Sentiment Loop: Analyze the clustered narrative themes, like "quiz:, dig, out," to derive insights on how these conversations shape public perception. Set a threshold for the meta-sentiment score, say above 0.5, to filter for high-impact narratives.

Forming Gap Analysis: With the topics "world," "world’s," and "cup," you can create alerts for sentiment shifts when mainstream topics like "quiz:, dig, out" emerge. Set a rule to trigger an action (like a notification) when these form a significant gap against your standard metrics.

We encourage you to dive into our documentation at pulsebit.lojenterprise.com/docs and see how quickly you can integrate this into your workflow. Copy-paste the code snippets above, and you can be up and running in under 10 minutes. Get ready to catch those world sentiment leads before they pass you by!

Top comments (0)